Two centuries of cranberry farming transformed thousands of acres of natural wetlands into artificially elevated agricultural fields in Southeastern Massachusetts. Today, scientists understand that this transformation came at a high cost to the environment. Now that the economics of the cranberry industry have made it less advantageous to farm in the region, the time is right for policies and funding that encourage farmers to consider restoration. Living Observatory was founded in 2011 as a learning collaborative of scientists, artists, and wetland restoration practitioners to document, interpret, and reveal aspects of change as it occurs prior to, during, and following the first few restoration projects in Massachusetts.

Several projects are currently underway, and researchers from many disciplines are investigating what changes can be observed to quantify the benefits and limitations of cranberry bog restoration. One such project is being carried out by researchers at the MIT Media Lab's Responsive Environments Group. They have developed and deployed a sensor network that documents ecological processes and allows people to experience the data at different spatial and temporal scales. Small, distributed, low-power sensor devices capture climate, soil, water, and other environmental data, while others stream audio from high in the trees and underwater. Visit any time from dawn till dusk and again after midnight; if you're lucky you might just catch an April storm, a flock of birds, or an army of frogs.

More about the Responsive Environments Group

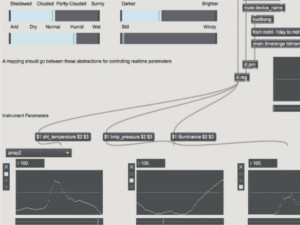

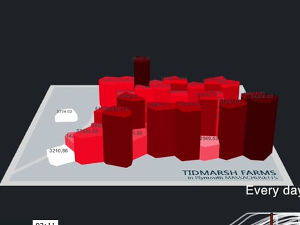

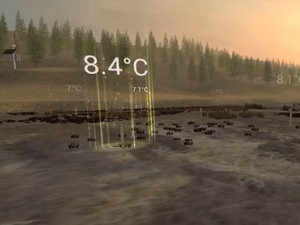

Many current projects in the group make use of the Tidmarsh site and its data. We're looking for new ways to explore and experience data about the environment. The flagship project is a cross-reality sensor data browser, built with the Unity game engine, for experimenting with presence and multimodal sensory experiences. Built on LIDAR-scanned terrain data, the virtual Tidmarsh experience integrates real-time data from the sensor networks with real-time audio streams and other media. The soundtrack is driven by the sensor data: flashes and ukulele notes occur whenever new data arrives from a sensor, and pitch follows the readings, with higher pitches indicating warmer temperatures, for example.
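To make the idea of sensor-driven pitch concrete, here is a minimal sketch of one way a temperature reading could be turned into a note. It is not the mapping used in the Tidmarsh browser, which this page does not specify. The function names, the temperature range, the pentatonic scale, and the base note are all assumptions chosen for illustration.

```python
# Hypothetical sonification sketch: warmer readings produce higher notes.
# The scale, range, and base note are illustrative assumptions, not the
# mapping used by the actual Tidmarsh soundtrack.

PENTATONIC_STEPS = [0, 2, 4, 7, 9]  # major pentatonic degrees, in semitones


def temperature_to_midi(temp_c, low_c=-10.0, high_c=35.0, base_note=60, octaves=2):
    """Map a temperature in Celsius to a MIDI note on a pentatonic scale."""
    # Clamp the reading so out-of-range values land on the lowest or highest note.
    clamped = max(low_c, min(high_c, temp_c))
    fraction = (clamped - low_c) / (high_c - low_c)

    # Pick one of the available scale notes; warmer means a higher index.
    n_notes = len(PENTATONIC_STEPS) * octaves
    index = min(int(fraction * n_notes), n_notes - 1)

    octave, degree = divmod(index, len(PENTATONIC_STEPS))
    return base_note + 12 * octave + PENTATONIC_STEPS[degree]


def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in hertz (A4 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


if __name__ == "__main__":
    # Made-up example readings, in degrees Celsius.
    for reading in (-5.0, 12.5, 30.0):
        note = temperature_to_midi(reading)
        print(f"{reading:5.1f} C -> MIDI {note} ({midi_to_hz(note):.1f} Hz)")
```

Snapping to a pentatonic scale is a design choice rather than a requirement: it keeps any combination of simultaneous notes from sounding dissonant, which matters when many sensors report at once.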

Navigating this Site

Using the links at the top of this page, you can view sensor data, see real-time camera feeds, and listen to live audio from several locations at Tidmarsh. Each cluster of sensors has its own page. A map view also lets you explore Tidmarsh and the surrounding area overlaid with live sensor data and other data layers.

The Herring site overlooks the stream channel, and you might spot river herring running in the spring. Other wildlife, including great blue herons, muskrats, otters, and deer, make frequent appearances. The sensors nearby are outfitted with soil moisture probes that we are using to study the effects of microtopography (a restoration technique) at Tidmarsh.

The Impoundment site is the location of a former reservoir. Here, we have cameras and a large array of microphones. You can listen 24/7 (hover over the video and click the speaker to unmute). We are exploring ways that we can use live audio and our years-long archive of recordings to track the return of wildlife and the "health" of a wetland site after a restoration. You can also listen to the live audio at livingsounds.earth.

There are several other sensor sites, too, so be sure to explore the links at the top of the Data page!

Media

Publications

- Learning from the Restoration of Wetlands on Cranberry Farmland: Preliminary Benefits Assessment. Living Observatory and collaborators — December 2020.

- Sensor Networks for Experience and Ecology. (Ph.D. Thesis). Brian Mayton — September 2020.

- Deep Learning Locally Trained Wildlife Sensing in Real Acoustic Wetland Environment. Clement Duhart, Gershon Dublon, Brian Mayton, and Joseph Paradiso. In Communications in Computer and Information Science, ISSN 1865-0929, Springer. September 2018. Best Paper Award - SIRS’18.

- Sensor(y) Landscapes: Technologies for New Perceptual Sensibilities. (Ph.D. Thesis). Gershon Dublon — June 2018.

- The Networked Sensory Landscape: Capturing and Experiencing Ecological Change Across Scales. Brian Mayton, Gershon Dublon, Spencer Russell, Evan F. Lynch, Don Derek Haddad, Vasant Ramasubramanian, Clement Duhart, Glorianna Davenport, and Joseph A. Paradiso. Presence: Teleoperators and Virtual Environments, 26(2), MIT Press, 2017.

- Resynthesizing Reality: Driving Vivid Virtual Environments from Sensor Networks. Don Derek Haddad, Gershon Dublon, Brian Mayton, Spencer Russell, Xiao Xiao, Ken Perlin, and Joseph A. Paradiso. Presented at SIGGRAPH '17, 30 July - 3 August 2017, Los Angeles, CA.